May 10 2021

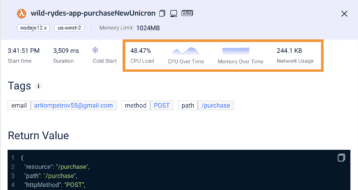

AWS announced AWS Lambda Extensions back in October 2020 and I wrote extensively about it at the time – what it is, how it works, and why you should care. In short, Lambda Extensions allow operational tools to integrate with your Lambda functions and run either in-process alongside your code or in a separate process. To better understand the problems they solve and their use cases, please read my previous article. Also, check out the Lumigo extension, which allows our platform to profile your Lambda invocations and collect additional information such as CPU load, CPU over time, Memory over time, and network usage…

Today, Lambda Extensions are generally available in the US East (N. Virginia), EU (Ireland), and EU (Milan) regions, with more regions to come in the coming days and weeks. As part of this release, AWS has also packed in some much-appreciated performance improvements!

Early return from function invocations

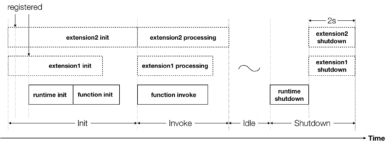

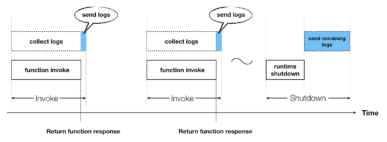

You see, when Lambda Extensions were first released, all extensions ran in parallel with your code during an invocation, and that invocation finished only when both your code and the extensions had finished (see the lifecycle below).

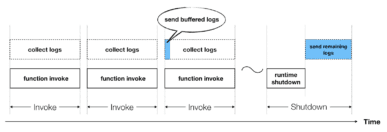

This meant that, as an extension author, I had to take a great deal of care to ensure that my extension didn’t add latency to your Lambda function’s execution time. Lumigo’s log-shipper extension, for instance, could not send your log data immediately after your code finished because that would have added latency to your function! Instead, it buffers the logs and sends them at the start of a subsequent invocation if the buffer is full or if a sufficient amount of time has passed. This way, it doesn’t add any user-facing latency and doesn’t impact the user experience of your application.
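To make the buffering strategy concrete, here is a minimal sketch in Python. It is not Lumigo's actual implementation; the `LogBuffer` class, its thresholds, and the `send` callback are all hypothetical names chosen for illustration. The idea is simply that log lines accumulate in memory and are shipped only when a new invocation starts and the buffer is either full or old enough.

```python
import time
from typing import Callable, List


class LogBuffer:
    """Hypothetical buffer that flushes only at the start of an invocation."""

    def __init__(
        self,
        send: Callable[[List[str]], None],
        max_items: int = 100,          # assumed threshold, tune to taste
        max_age_seconds: float = 10.0,  # assumed threshold, tune to taste
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._send = send
        self._max_items = max_items
        self._max_age = max_age_seconds
        self._clock = clock
        self._items: List[str] = []
        self._last_flush = clock()

    def add(self, line: str) -> None:
        """Store a log line without doing any network I/O."""
        self._items.append(line)

    def should_flush(self) -> bool:
        """True if the buffer is full or enough time has passed since the last flush."""
        if not self._items:
            return False
        full = len(self._items) >= self._max_items
        stale = self._clock() - self._last_flush >= self._max_age
        return full or stale

    def on_invoke_start(self) -> bool:
        """Call when a new invocation begins; returns True if a batch was sent."""
        if not self.should_flush():
            return False
        batch, self._items = self._items, []
        self._send(batch)
        self._last_flush = self._clock()
        return True
```

The trade-off is visible in `on_invoke_start`: nothing is sent until the next invocation arrives, so logs from a quiet function can sit in the buffer for a long time.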

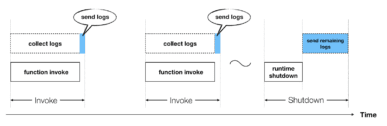

But what if you wanted to see your logs (or other telemetry) in close to real-time? Then you had to accept a performance hit, because the Lambda invocation did not finish until the extension was done sending your logs after your code finished.

Fortunately, with today’s update, extension authors no longer need to make this trade-off!

When the function code finishes, its response is returned to the caller right away. Extensions can continue to run after that, and you will still pay for that extra execution time, but the caller gets their response earlier.

This lifecycle change applies to all Lambda extensions. You don’t have to make any changes to take advantage of this.
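For readers who have not written an extension, the sketch below shows the basic event loop of an external extension against the Lambda Extensions API, using only the Python standard library. The `register` and `event/next` endpoints and their headers come from the Extensions API; the function names, the `work` callback, and the extension name are placeholders of my own. With the new lifecycle, whatever `work` does between two calls to `event/next` can carry on after the function's response has gone back to the caller.

```python
import json
import os
import urllib.request
from typing import Callable, Dict

API_VERSION = "2020-01-01"


def register(runtime_api: str, name: str) -> str:
    """Register for INVOKE and SHUTDOWN events; returns the extension identifier."""
    req = urllib.request.Request(
        f"http://{runtime_api}/{API_VERSION}/extension/register",
        data=json.dumps({"events": ["INVOKE", "SHUTDOWN"]}).encode(),
        # The name must match the extension's file name.
        headers={"Lambda-Extension-Name": name},
        method="POST",
    )
    with urllib.request.urlopen(req) as resp:
        return resp.headers["Lambda-Extension-Identifier"]


def next_event(runtime_api: str, extension_id: str) -> Dict:
    """Block until the next lifecycle event; calling this signals 'I am done'."""
    req = urllib.request.Request(
        f"http://{runtime_api}/{API_VERSION}/extension/event/next",
        headers={"Lambda-Extension-Identifier": extension_id},
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())


def run(get_next: Callable[[], Dict], work: Callable[[Dict], None]) -> int:
    """Process events until SHUTDOWN; returns the number of INVOKE events seen."""
    invokes = 0
    while True:
        event = get_next()
        work(event)  # hypothetical background work, e.g. shipping telemetry
        if event["eventType"] == "SHUTDOWN":
            return invokes
        invokes += 1


def main() -> None:
    # AWS_LAMBDA_RUNTIME_API is set by the Lambda environment.
    api = os.environ["AWS_LAMBDA_RUNTIME_API"]
    ext_id = register(api, "example-extension")  # placeholder name
    run(lambda: next_event(api, ext_id), work=lambda event: None)
```

Inside a real extension you would call `main()` from the executable's entry point. The loop takes `get_next` as a parameter so that it can be exercised locally with canned events.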

Considerations

There are a number of considerations to keep in mind though.

You pay for all compute time used

Even after the function response is returned to the caller, the Invoke phase of the lifecycle doesn’t finish until all extensions have finished. This means that you will still pay for the extra time that extensions run after the function code finishes.
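As a rough illustration of the billing impact, the sketch below adds the extension's extra running time to the handler's duration before pricing it. The per-GB-second price is an assumption for illustration only (check the current Lambda pricing page for your region and architecture), the per-request charge is ignored, and the function name is my own.

```python
# Assumed on-demand price per GB-second; verify against current AWS pricing.
ASSUMED_PRICE_PER_GB_SECOND = 0.0000166667


def invocation_cost(
    handler_ms: float,
    extension_tail_ms: float,
    memory_mb: int,
    price_per_gb_second: float = ASSUMED_PRICE_PER_GB_SECOND,
) -> float:
    """Duration cost of one invocation, including time extensions run afterwards."""
    billed_seconds = (handler_ms + extension_tail_ms) / 1000
    memory_gb = memory_mb / 1024
    return billed_seconds * memory_gb * price_per_gb_second


# A 100 ms handler followed by 50 ms of extension work is billed as 150 ms,
# so the duration cost is 1.5x that of the handler alone.
without_tail = invocation_cost(100, 0, 512)
with_tail = invocation_cost(100, 50, 512)
```

The caller sees the response after roughly 100 ms in both cases; only the bill changes.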

Slow extensions can affect cold start frequency

Until the entire Invoke phase is complete, the Lambda worker is not able to accept another incoming request. If another invocation request comes in during that time, the Lambda platform might have to create another worker to handle it if the other workers are also busy. So the longer extensions run after the function response is returned, the more likely you are to see additional cold starts.
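A back-of-the-envelope way to reason about this is Little's law: the number of busy workers is roughly the request rate multiplied by how long each worker stays busy. The sketch below is a steady-state estimate only, it ignores bursts and whatever the Lambda platform does internally, and the function name is hypothetical.

```python
import math


def workers_needed(
    requests_per_second: float,
    handler_ms: float,
    extension_tail_ms: float = 0.0,
) -> int:
    """Rough steady-state estimate of concurrent workers (Little's law)."""
    busy_ms = handler_ms + extension_tail_ms
    return math.ceil(requests_per_second * busy_ms / 1000)


# At 100 requests per second, a 100 ms handler keeps about 10 workers busy.
baseline = workers_needed(100, 100)
# If extensions run for another 50 ms after each response, that becomes about 15.
with_extensions = workers_needed(100, 100, 50)
```

Every extra worker has to be cold-started at some point, which is why a long-running extension can push the cold start count up even though callers get their responses sooner.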

Early return is not always possible

If the caller invokes a function synchronously and tails the logs from the invocation, then the function response is not returned early. This exception exists because the Lambda platform returns ALL the logs from the entire Invoke phase, and the Invoke phase is not complete until the extensions finish executing.
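For reference, this is what such a call looks like from Python. The sketch assumes a boto3 Lambda client is passed in as `client`; the `invoke_with_tail` helper and the function name in the usage note are hypothetical. A synchronous invoke with `LogType="Tail"` asks Lambda to include the tail of the execution log, base64-encoded, in the response.

```python
import base64
import json
from typing import Any, Tuple


def invoke_with_tail(client: Any, function_name: str, payload: dict) -> Tuple[bytes, str]:
    """Invoke synchronously and return (response body, decoded log tail)."""
    response = client.invoke(
        FunctionName=function_name,
        InvocationType="RequestResponse",  # synchronous invocation
        LogType="Tail",                    # ask for the log tail in the response
        Payload=json.dumps(payload).encode(),
    )
    # LogResult is a base64-encoded string containing the end of the log.
    logs = base64.b64decode(response["LogResult"]).decode("utf-8", errors="replace")
    return response["Payload"].read(), logs
```

With boto3 installed, you would call it as `invoke_with_tail(boto3.client("lambda"), "my-function", {"key": "value"})`. Callers that use this option should expect the response to arrive only after the extensions are done.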

Summary

At long last, Lambda offers background processing time. This opens up many possibilities for AWS partners to build extensions that take advantage of it, so you no longer have to make a trade-off between performance and seeing your data quickly.

Even with the caveats in mind, this is still very exciting news for Lambda users. And I, for one, can’t wait to see all the tools that will make use of this new capability. If you haven’t yet, check out our log-shipper extension and see how you can process Lambda logs without going through CloudWatch Logs first and save on CloudWatch cost.

Our team is also hard at work making improvements to the Lumigo tracer, which provides no-code distributed tracing and performance monitoring. Soon, we will eliminate the latency overhead of our tracer (which is ~10ms now) with the early-return capability. And you will be able to install the Lumigo tracer as a standalone NPM package, a Lambda layer, or a Lambda extension!

Exciting times ahead 🙂